Your Digital Transformation Starts Here

We are now living in the era of Big Data and AI, where data lies at the foundation of all important business decisions and strategies.

In most cases, the existing infrastructure simply cannot cope with the scale and complexity of such data. This means that valuable information assets are wasted. Avinton Data Platform introduces advanced data management to generate significant business value from your data.

Fully interactive interface for data flows and data analytics

Wouldn't it be great if you could ...

store your Big Data in a secure, low-cost and scalable environment?

perform real-time analytics on your Big Data without incurring huge cloud-computing fees?

integrate the end-to-end data workflow on a single AI platform?

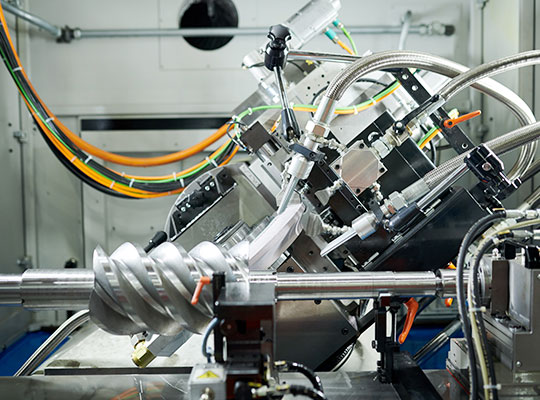

Big Data and AI in Production

Big Data, AI, Deep Learning, IoT ... these technologies are not just for the IT industry. In fact, nothing could be further from the truth. There is not an industry or company in the world in which there is no opportunity for digital transformation. Data is everywhere, and it's transforming workflows across all industries.

For most manufacturing companies, the ultimate goal comes down to increasing quality and reducing cost. The advent of IoT brings a whole new surge of potential in these areas. Data is being generated not only during the manufacturing process but also in the consumption phase.

Manufacturing firms can now collect valuable insights and feed them back into process improvement and a better user experience. It is already being proven across domains that, in certain situations, AI models trained to detect defects and predict faults can surpass their human peers.

Quality Assurance

Process Optimization

Fault Detection

Failure Analysis

Retail has always benefitted from the utilization of data. With the surge in e-commerce, retailers are able to track purchase records, segment customers, and make personalized recommendations as part of highly effective marketing campaigns.

Using data to predict trends and forecast demand can prove to be a huge cost saver, as retailers can manage warehouse inventories much more efficiently. Chatbots and virtual assistants powered by AI are also proving their cost benefit by taking over tasks from human workers, such as answering queries and handling customer service.

Customized Recommendations

Marketing Promotion

Inventory Control

Channel Optimization

Data is revolutionizing the healthcare industry. Machine vision is now widely used to train models that can identify and predict diseases from medical images. Diagnosis that would otherwise require an expert with decades of training and experience is now being supplemented, and in the future may even be replaced, by AI.

The benefits of Big Data and AI in healthcare are not limited to the surgery room. Administrative tasks such as health records, billing, and medical coding are huge cost burdens that can be greatly reduced with automated approaches developed with AI.

Medical Imaging

Drug Development

Process Automation

Disease Detection

Finance and insurance are widely considered to be intensively data-driven industries, with financial products and services designed around complex calculations of risks and trends. Digital transformation and innovative data management tools present new business potential.

With the advent of Big Data and AI, predictive modeling is being leveraged to make smart decisions in areas such as investment and stock market analysis. Accumulated data from customer transactions are helping to mitigate operational risk, through the detection of fraudulent activities.

Customer Segmentation

Fraud Detection

Risk Management

Stock Trend Monitoring

The traditional farm may seem far removed from these digital technologies, but that is far from reality. Sustaining food production is a global challenge, given an ever-growing population and the advent of climate change.

Big Data and AI are playing an increasingly important role in the agriculture sector’s digital transformation process. Machine learning and computer vision are helping farmers increase productivity, such as through weed identification, yield forecasting, and process automation.

Supply Chain Optimization

Crop Health Monitoring

Yield Forecasting

Waste Reduction

Why Avinton Data Platform?

Smarter

AI and Machine Learning on-premise

Handle various types of data smoothly

Interactive dashboards with smart alerts

Ease of maintenance

Faster

Collect and analyze data in real-time

In-memory smart caching for high-speed performance

Share your data instantly and safely

Get rich insights and data visualization within just a few clicks

Cheaper

Avoid high cloud and license fees

Utilizing open-source technologies for reduced costs of ownership

Highly scalable to handle massive amounts of data

Deployable on commodity hardware

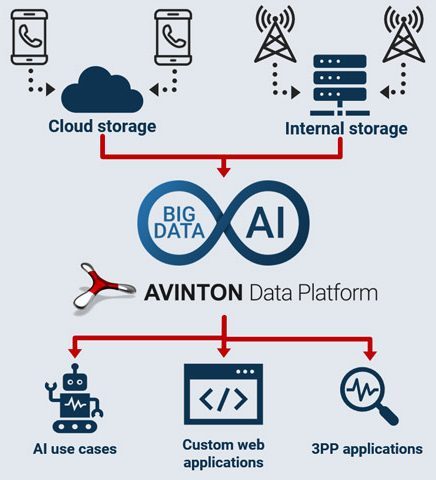

Avinton Data Platform is a scalable and economical data management solution for enterprises. We have invested significant effort in taking cutting-edge, complex technologies and making them more accessible.

Our solution utilizes the latest technologies in the field of Big Data and AI, to host the end-to-end data workflow on a single platform. It is the blueprint for your digital transformation.

Case Study: Revolutionizing Telecom with Big Data and AI

Avinton Data Platform is deployed in full production at a global networking and telecommunications company. It is serving as the core infrastructure for its digital transformation to a data-driven enterprise. The platform has demonstrated scalability on multiple occasions to handle an influx of ever more data and users, and its current capacity is already at hundreds of terabytes, and growing fast.

Today, Avinton Data Platform is collecting, accumulating, processing and analyzing data from mobile devices and network equipment across multiple countries on a daily basis. The data generates value for the user through intuitive visualization dashboards for tracking and predicting vital KPIs, as well as through trained AI models that use machine vision for on-site fault detection.

Featured Technologies

We utilize the latest technologies in the field of Big Data and AI. That includes Hadoop Distributed File System for the file system, Apache Spark for distributed data processing, and Kubeflow for ML Ops.

Data Collection

Data Storage

Data Analysis

Model Training

FAQ

Find answers to some frequently asked questions about our Avinton Data Platform.

How expensive is it to own Avinton Data Platform?

As with any solution, there is Capital Expenditure (CAPEX) and Operational Expenditure (OPEX). Our solution utilizes the latest yet proven open-source technologies which, unlike most vendor software, do not require license fees, and hence help to lower OPEX in the long run. At Avinton we are fully committed to the open-source ethos and give back to the developer community based on findings and enhancements achieved through our technology implementation.

In addition, the platform is designed to run on commodity hardware to lower CAPEX both in the initial deployment phase and in later phases when scaling out the platform. We can factor in existing hardware that is available for repurposing, to reduce the initial cost of deployment. Users can proceed with the vendor of their choice, and hardware pricing can start from as low as 5 million yen.

The actual total cost of deploying the platform will depend on various defining factors, such as the environment (cloud or on-premise), data volume, data retention period, number of users and so on. Please contact our sales representative through this form for quotations and demo requests based on individual requirements.

What if we have data in many different formats?

This is a very common issue that most enterprises face when handling Big Data. Studies reveal that less than 30% of raw data owned by businesses is usable as-is. This is where data wrangling comes in, and it is usually the most important and time-consuming part of any data-related project.

Avinton Data Platform can be packaged with customized data connectors, which parse and cleanse your data for the later phases of the workflow. At Avinton we have data engineers experienced in the latest technologies such as Apache Spark, the de facto standard for data processing. Automated data parsing ensures that users have access to the latest cleansed data, which can be fed directly into BI dashboards or the like.
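To give a feel for what parsing and cleansing mixed formats involves, here is a minimal sketch in plain Python. It is not the platform's connector code: the field names (`device_id`, `deviceId`, `value`), the sample inputs, and every function name are hypothetical, and a production connector would more likely run on a distributed engine such as Apache Spark.

```python
import csv
import io
import json


def parse_csv(text):
    """Parse CSV text into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


def parse_json_lines(text):
    """Parse newline-delimited JSON into a list of dicts."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def cleanse(record):
    """Map a raw record onto one common schema; return None if unusable."""
    # Hypothetical: the two sources spell the device key differently.
    device = (record.get("device_id") or record.get("deviceId") or "").strip()
    try:
        value = float(record.get("value"))
    except (TypeError, ValueError):
        return None  # non-numeric or missing reading
    if not device:
        return None  # a reading without a device cannot be attributed
    return {"device_id": device.lower(), "value": value}


def normalize(csv_text, json_text):
    """Parse both inputs and keep only records that survive cleansing."""
    raw = parse_csv(csv_text) + parse_json_lines(json_text)
    return [r for r in map(cleanse, raw) if r is not None]


# Illustrative inputs only, not real customer data.
CSV_SAMPLE = "device_id,value\nA1,3.5\nA2,n/a\n"
JSON_SAMPLE = '{"deviceId": "B7", "value": "10"}\n{"deviceId": "", "value": 4}\n'

records = normalize(CSV_SAMPLE, JSON_SAMPLE)
print(records)
```

Of the four sample rows, only two survive: one has a non-numeric value and one has no device identifier. This is the kind of filtering that makes raw data usable downstream.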

What if our data is all over the place?

Again, this is a very common issue when handling Big Data. The traditional approach would be to use bash scripts, Perl scripts, SQL stored procedures and the like to pull data from its source. However, with the advent of Big Data and IoT, updating such scripts and procedures is simply not scalable. The value of data depreciates quickly, and so a real-time solution is required.

Avinton Data Platform comes with an intuitive GUI which allows for data pipeline configuration. These automated pipelines can collect data from various sources, whether from the cloud, corporate systems, file servers or the like. Collecting data in one place enables users to blend it together for multi-dimensional data analytics.
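The idea of collecting from several sources and blending the results can be sketched in a few lines of Python. This is only an illustration of the concept, not how the platform's GUI-configured pipelines are implemented; the source names, fetch functions, and fields below are all hypothetical stand-ins.

```python
from collections import defaultdict


def collect(sources):
    """Run every source callable and tag each record with its origin."""
    collected = []
    for name, fetch in sources.items():
        for record in fetch():
            collected.append({**record, "source": name})
    return collected


def blend(records, key):
    """Merge records that share the same key value into a single row."""
    merged = defaultdict(dict)
    for record in records:
        row = merged[record[key]]
        for field, value in record.items():
            if field == "source":
                row.setdefault("sources", []).append(value)
            else:
                row[field] = value
    return dict(merged)


# Hypothetical stand-ins for a corporate system and a file server.
def fetch_crm():
    return [{"customer_id": 1, "name": "Acme"}]


def fetch_file_server():
    return [
        {"customer_id": 1, "monthly_usage_gb": 120},
        {"customer_id": 2, "monthly_usage_gb": 45},
    ]


SOURCES = {"crm": fetch_crm, "file_server": fetch_file_server}
blended = blend(collect(SOURCES), key="customer_id")
print(blended)
```

Once records from both sources share a key, a single row can carry fields from each of them, which is what makes multi-dimensional analysis possible.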

What if the volume of data we are handling changes in the future?

Avinton Data Platform consists of applications running in containers fully orchestrated by Kubernetes, which makes the architecture highly scalable and able to handle such changes during the lifetime of the platform.

Should there be a need for more physical resources such as storage or CPU, it is a simple matter of adding nodes to the existing cluster, with minimal downtime required for the expansion.
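As a rough illustration of how a change in data volume translates into nodes, the following back-of-the-envelope calculation may help. Every number in it is an assumption chosen for the example (daily ingest, retention period, usable capacity per node, headroom), not a figure from an actual deployment; the replication factor of 3 is the HDFS default.

```python
import math


def nodes_required(daily_ingest_tb, retention_days, usable_tb_per_node,
                   replication_factor=3, headroom=0.25):
    """Estimate storage nodes needed for a given ingest rate and retention.

    replication_factor=3 mirrors the HDFS default; headroom is an assumed
    safety margin for temporary files and growth.
    """
    raw_tb = daily_ingest_tb * retention_days * replication_factor
    with_headroom_tb = raw_tb * (1 + headroom)
    return math.ceil(with_headroom_tb / usable_tb_per_node)


# Hypothetical scenario: ingest doubles from 0.5 TB/day to 1 TB/day,
# with one year of retention and 48 TB usable per node.
current = nodes_required(0.5, 365, 48)
future = nodes_required(1.0, 365, 48)
print(current, future, future - current)
```

Under these assumptions, the difference between the two results is the number of nodes that would be added to the existing cluster.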

How do we monitor the health of the overall platform?

Monitoring the health of not just the applications but also the nodes on which they run is critical for the stability of the entire system.

Centralized monitoring is one of the defining features of our solution. Custom dashboards can be created to monitor standard health metrics (like CPU and memory usage) and logs, for both the applications and nodes. Smart alerting rules can also be implemented, to notify administrators of any health issues detected.
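To illustrate what an alerting rule can look like, here is a minimal Python sketch that fires only when a metric stays above a threshold for several consecutive samples, which avoids alerting on a single spike. The rule structure, metric names, thresholds, and sample values are all hypothetical; the platform's actual rule format is not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    metric: str           # e.g. "cpu_percent" (hypothetical metric name)
    threshold: float      # alert when samples exceed this value
    min_consecutive: int  # number of most recent samples that must all exceed it


def evaluate(rule, samples):
    """Return True if the last `min_consecutive` samples all exceed the threshold."""
    if rule.min_consecutive < 1:
        return False
    recent = samples[-rule.min_consecutive:]
    return (len(recent) == rule.min_consecutive
            and all(value > rule.threshold for value in recent))


def check_nodes(rules, metrics_by_node):
    """Evaluate every rule against every node and collect alert messages."""
    alerts = []
    for node, metrics in sorted(metrics_by_node.items()):
        for rule in rules:
            if evaluate(rule, metrics.get(rule.metric, [])):
                alerts.append(f"{node}: {rule.metric} above {rule.threshold}")
    return alerts


# Illustrative rules and samples only.
RULES = [
    AlertRule("cpu_percent", 90.0, 3),
    AlertRule("memory_percent", 85.0, 2),
]
METRICS = {
    "node-1": {"cpu_percent": [50, 95, 96, 97], "memory_percent": [70, 90]},
    "node-2": {"cpu_percent": [95, 96, 40], "memory_percent": [86, 88]},
}

alerts = check_nodes(RULES, METRICS)
print(alerts)
```

In this example node-1 triggers the CPU rule and node-2 the memory rule, while node-2's brief CPU spike is ignored because it did not persist.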

Can we benefit from the solution even if we have never used AI before?

Our solution supports AI projects end to end. There is no need to separately prepare third-party AutoML applications, which tend to be limited in modelling capacity and expensive to use. Moreover, the use of container-native workflows enables the deployment of AI models at scale, so that they can be used reliably by users accessing the system.

For users who have data at hand but are not confident as to whether it is sufficient to generate AI models, Avinton can provide AI consulting as a separate service. This will usually involve a short PoC (Proof of Concept) using sample data sets provided

Why Avinton?

Avinton has been handling data-orientated projects in various domains since long before terms like Big Data and AI gained the degree of recognition seen today. Today, over half of our ongoing projects focus on Big Data and AI solutions. By providing innovative data management and data analysis software, we equip our customers with all the tools required for successful digital transformation endeavours.

All of our development is done in-house; we never use subcontractors. We have a team of engineers rich in both age and ethnic diversity, with proven expertise in various domains of IT. This enables us to answer specific requirements and deliver production-ready solutions that generate value from day one. We can be fully confident at all times that the solutions delivered are of the highest quality and reliability.

We also provide technical consulting to various clients, from small companies (50 to 100 employees) to multinational enterprises. The team is managed by our lead consultant, who has over 15 years of experience in more than 10 different countries, to best advise customers on technical solutions that make the best use of their data assets.

We will be happy to provide you with more information.